Hello there! As the title hints, this post is about resolving false alerts that SCOM generates for clustered VMs / resources that no longer exist.

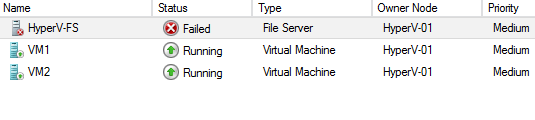

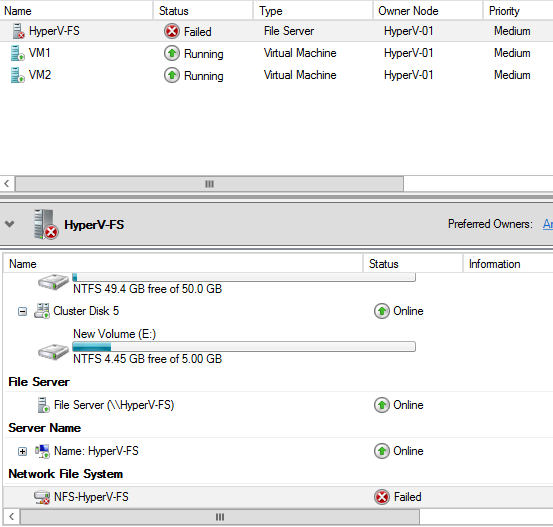

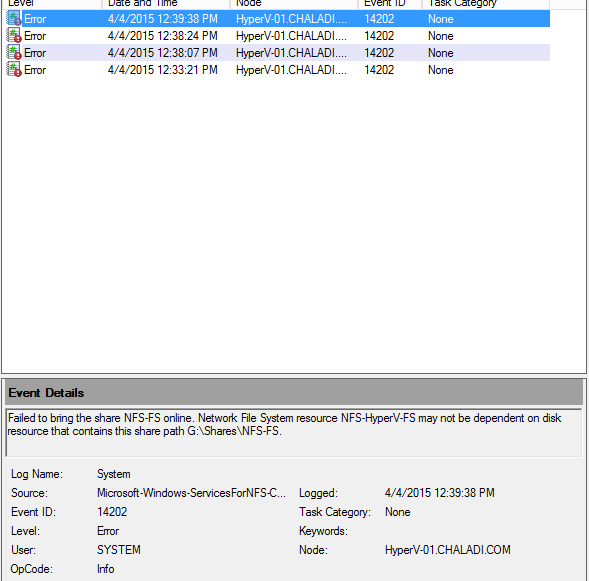

I recently came across a situation where SCOM generated a lot of false alerts for Hyper-V 2012 R2 clustered resources, reporting that VM resource groups were in a critical state. However, the VMs had already been deleted from the cluster, and the cluster resource monitoring MP should monitor only the resources that actually exist on the cluster. Alerts generated by an "alert monitor" for deleted cluster resources must be closed manually in SCOM, because the monitor keeps checking the non-existent resource for a state change. Even after closing them, the alerts for the deleted VMs keep coming back in the console.

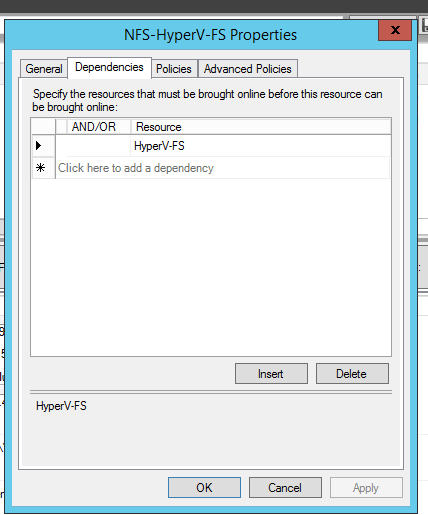

The whole problem started when a couple of nodes in the Hyper-V cluster were put into maintenance mode for some activity and the hosts were shut down as part of the process. During this period, a couple of VMs were deleted using the VMM management server, and those VMs were gone from the cluster as expected. However, SCOM picked up data only from the online cluster nodes and could not pick up data about the deleted VMs from the offline nodes. When the shut-down Hyper-V hosts were brought back online, SCOM started behaving oddly: it still thought the deleted VMs were on those hosts, and it generated a lot of false alerts reporting the deleted VMs as being in a critical state.

At this point, the data in the SCOM database is inconsistent. There is no way to remove a clustered resource from the cluster management pack view dashboard. We can only place the resource group in maintenance mode (MM).
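To confirm which alerts are stale before changing anything, one rough approach is to compare the resource group names SCOM is alerting on against the groups that actually exist on the cluster. The sketch below only illustrates that comparison in Python. The group names are made-up sample data, and how you collect the two lists (for example, from the SCOM console and from Failover Cluster Manager) is left open.

```python
def find_stale_alert_targets(alerted_groups, live_cluster_groups):
    """Return alerted resource groups that no longer exist on the cluster.

    Names are compared case-insensitively and with surrounding spaces ignored.
    The order of the alerted list is preserved and duplicates are dropped.
    """
    live = {name.strip().lower() for name in live_cluster_groups}
    stale = []
    for name in alerted_groups:
        if name.strip().lower() not in live and name not in stale:
            stale.append(name)
    return stale


# Hypothetical sample data, not taken from the environment in this post.
alerted = [
    "SCVMM VM01 Resources",
    "SCVMM VM02 Resources",
    "SCVMM VM03 Resources",
]
live = ["SCVMM VM01 Resources", "Cluster Group", "Available Storage"]

stale_targets = find_stale_alert_targets(alerted, live)
print(stale_targets)
```

Anything this returns is a resource group that SCOM still alerts on but that the cluster no longer has, which is the inconsistency described above.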

To solve this bug / data inconsistency in SCOM, the cluster monitoring management packs must be deleted and then imported again. This needs to be done once all cluster nodes are back online and active in the cluster, so the MPs can pick up data from all Hyper-V nodes.

Any custom management packs that depend on the cluster MPs need to be exported from the Administration view of SCOM first. After deleting all the cluster MPs, re-importing them into SCOM along with the custom MPs will fix this issue. It will take about an hour or more for the status of the clusters to be picked up / updated.
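The order of these steps matters, because SCOM will not let you delete a management pack while another MP still references it. As a minimal sketch of that ordering (not a tool that talks to SCOM), the Python below works out a removal order and a re-import order from a dependency map. The MP names and the `dependencies` map are hypothetical placeholders, so substitute the references you actually see in the Administration view.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical MP names. Each key maps to the MPs it references (depends on).
dependencies = {
    "Cluster.Library": [],
    "Cluster.Monitoring": ["Cluster.Library"],
    "Custom.Cluster.Overrides": ["Cluster.Monitoring"],
}

# Hypothetical set of custom MPs that must be exported before deletion.
custom_mps = {"Custom.Cluster.Overrides"}


def import_order(deps):
    """MPs ordered so that every MP comes after the MPs it references."""
    return list(TopologicalSorter(deps).static_order())


def removal_order(deps):
    """Reverse of the import order: dependent MPs are removed first."""
    return list(reversed(import_order(deps)))


to_export = [mp for mp in removal_order(dependencies) if mp in custom_mps]

print("1. Export:", to_export)
print("2. Delete in order:", removal_order(dependencies))
print("3. Import in order:", import_order(dependencies))
```

In this sample the custom override MP is exported and deleted first, the cluster MPs follow, and the import runs in the opposite direction so that each MP finds its references already in place.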